In the context of firewalls, the crux of the paradox boils down to whether black holes have smooth horizons (as required by the equivalence principle). It turns out that this is intimately related to the question of how the interior of the black hole can be reconstructed by an external observer. AdS/CFT is particularly useful in this regard, because it enables one to make such questions especially sharp. Specifically, one studies the eternal black hole dual to the thermofield double (TFD) state, which cleanly captures the relevant physics of real black holes formed from collapse.

To construct the TFD, we take two copies of a CFT and entangle them such that tracing out either results in a thermal state. Denoting the energy eigenstates of the left and right CFTs by and

, respectively, the state is given by

where is the partition function at inverse temperature

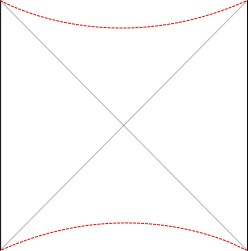

. The AdS dual of this state is the eternal black hole, the two sides of which join the left and right exterior bulk regions through the wormhole. Incidentally, one of the fascinating questions inherent to this construction is how the bulk spacetime emerges in a manner consistent with the tensor product of boundary CFTs. For our immediate purposes, the important fact is that operators in the left source states behind the horizon from the perspective of the right (and vice-versa). The requirement from general relativity that the horizon be smooth then imposes conditions on the relationship between these operators.
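As a quick sanity check on the defining property of the TFD, here is a minimal numerical sketch in a finite-dimensional toy model. The four-level spectrum and the value of $\beta$ are arbitrary choices of mine, not anything from the CFT; the point is only that tracing out the left copy leaves the right copy in the thermal state.

```python
import numpy as np

def tfd_state(energies, beta):
    """Coefficient matrix psi[i_L, i_R] of the TFD state for a toy spectrum."""
    weights = np.exp(-beta * np.asarray(energies, dtype=float) / 2.0)
    Z = np.sum(weights**2)               # Z_beta = sum_i exp(-beta * E_i)
    return np.diag(weights) / np.sqrt(Z)

def reduced_right(psi):
    """Trace out the left factor of |Psi> = sum_ij psi[i, j] |i>_L |j>_R."""
    return psi.T @ psi.conj()

energies = np.array([0.0, 0.7, 1.3, 2.1])   # hypothetical toy spectrum
beta = 1.5                                   # arbitrary inverse temperature

psi = tfd_state(energies, beta)
rho_R = reduced_right(psi)
thermal = np.diag(np.exp(-beta * energies)) / np.sum(np.exp(-beta * energies))

print(np.allclose(rho_R, thermal))
```

By symmetry the same holds for the left copy after tracing out the right.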

A noteworthy approach in this vein is the so-called “state-dependence” proposal developed by Kyriakos Papadodimas and Suvrat Raju over the course of several years [1,2,3,4,5] (referred to as PR henceforth). Their collective florilegium spans several hundred pages, jam-packed with physics, and any summary I could give here would be a gross injustice. As alluded to above, however, the salient aspect is that they phrased the smoothness requirement precisely in terms of a condition on correlation functions of CFT operators across the horizon. Focusing on the two-point function for simplicity, this condition reads:

$$\langle\mathrm{TFD}|\,\mathcal{O}(t,\mathbf{x})\,\tilde{\mathcal{O}}(t',\mathbf{x}')\,|\mathrm{TFD}\rangle = Z_\beta^{-1}\,\mathrm{tr}\!\left[e^{-\beta H}\,\mathcal{O}(t,\mathbf{x})\,\mathcal{O}(t'+i\beta/2,\mathbf{x}')\right]. \tag{2}$$

Here, $\mathcal{O}$ is an exterior operator in the right CFT, while $\tilde{\mathcal{O}}$ is an interior operator in the left; that is, it represents an excitation localized behind the horizon from the perspective of an observer in the right wedge (see the diagram above). The analytic continuation $t'\to t'+i\beta/2$ arises from the KMS condition (i.e., the periodicity of thermal Green functions in imaginary time). Physically, this is essentially the statement that one should reproduce the correct thermal expectation values when restricted to a single copy of the CFT.
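To see the $i\beta/2$ shift at work, here is a small numerical sketch in a finite-dimensional toy model. Everything in it is an assumption made for illustration: a random five-level spectrum, random matrices standing in for the operators, and the convention that the left-side partner of an operator is its transpose in the energy basis. With those choices, the two-sided correlator in the TFD equals a one-sided thermal trace with one operator continued to $t = i\beta/2$.

```python
import numpy as np

rng = np.random.default_rng(0)
d, beta = 5, 0.8
energies = np.sort(rng.uniform(0.0, 3.0, size=d))   # toy spectrum; H is diagonal in this basis
boltz = np.exp(-beta * energies)
Z = boltz.sum()

def random_operator(dim):
    return rng.normal(size=(dim, dim)) + 1j * rng.normal(size=(dim, dim))

A = random_operator(d)   # stands in for an exterior operator on the right copy
B = random_operator(d)   # operator whose partner will act on the left copy

# TFD vector in H_L (x) H_R, with basis index i_L * d + i_R
tfd = np.zeros(d * d, dtype=complex)
for i in range(d):
    tfd[i * d + i] = np.sqrt(boltz[i] / Z)

# Assumed convention: the left partner of B is its transpose in the energy basis
two_sided = tfd.conj() @ np.kron(B.T, A) @ tfd

# B(t) = exp(iHt) B exp(-iHt), evaluated at t = i * beta / 2
B_shifted = np.diag(np.exp(-beta * energies / 2)) @ B @ np.diag(np.exp(beta * energies / 2))
one_sided = np.trace(np.diag(boltz) @ A @ B_shifted) / Z

print(np.isclose(two_sided, one_sided))
```

The agreement is just cyclicity of the trace: both sides reduce to $Z_\beta^{-1}\,\mathrm{tr}\!\left[e^{-\beta H/2} A\, e^{-\beta H/2} B\right]$.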

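The question then becomes whether one can find such operators in the CFT that satisfy this constraint. That is, we want to effectively construct interior operators by acting only in the exterior CFT. PR achieve this through their so-called “mirror operators” $\tilde{\mathcal{O}}_n$, defined by

$$\tilde{\mathcal{O}}_n A_m|\Psi\rangle = A_m\,e^{-\beta H/2}\,\mathcal{O}_n^\dagger\,e^{\beta H/2}|\Psi\rangle, \tag{3}$$

where $A_m$ is any element of the algebra of exterior operators. While appealingly compact, it’s more physically insightful to unpack this into the following two equations:

$$\tilde{\mathcal{O}}_n|\Psi\rangle = e^{-\beta H/2}\,\mathcal{O}_n^\dagger\,e^{\beta H/2}|\Psi\rangle, \qquad \tilde{\mathcal{O}}_n A_m|\Psi\rangle = A_m\tilde{\mathcal{O}}_n|\Psi\rangle. \tag{4}$$

The key point is that these operators are defined via their action on the state $|\Psi\rangle$, i.e., they are state-dependent operators. For example, the second equation does not say that the operators commute; indeed, as operators, $[\tilde{\mathcal{O}}_n, A_m]\neq 0$. But the commutator does vanish in this particular state, $[\tilde{\mathcal{O}}_n, A_m]|\Psi\rangle = 0$. This may seem strange at first sight, but it’s really just a matter of carefully distinguishing between equations that hold as operator statements and those that hold only at the level of states. Indeed, this is precisely the same crucial distinction between localized states vs. localized operators that I’ve discussed before.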
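The distinction between operator statements and state-level statements is easy to see numerically. The following is only a toy sketch, not PR’s actual construction: the dimension, the random “small algebra” (the identity plus three random matrices, which is not closed under products), and the random target vector standing in for $e^{-\beta H/2}\mathcal{O}^\dagger e^{\beta H/2}|\Psi\rangle$ are all assumptions of mine. The operator `mirror` is defined purely through its action on the vectors $A_m|\Psi\rangle$, in the same spirit as above.

```python
import numpy as np

rng = np.random.default_rng(1)
d, n_ops = 8, 3                      # Hilbert-space dimension, number of non-trivial A_m

psi = rng.normal(size=d) + 1j * rng.normal(size=d)
psi /= np.linalg.norm(psi)           # reference state |Psi>

# Toy set {A_m}: the identity plus a few random operators (hypothetical stand-ins)
ops = [np.eye(d, dtype=complex)]
ops += [rng.normal(size=(d, d)) + 1j * rng.normal(size=(d, d)) for _ in range(n_ops)]

# Stand-in for the vector that the mirror operator should produce from |Psi>
target = rng.normal(size=d) + 1j * rng.normal(size=d)

V = np.column_stack([A @ psi for A in ops])      # the vectors A_m |Psi>
W = np.column_stack([A @ target for A in ops])   # their required images A_m (mirror |Psi>)
mirror = W @ np.linalg.pinv(V)                   # fixed only on span{A_m |Psi>}, zero elsewhere

# Commutators acting on the state vs. commutators as matrices
state_level = max(np.linalg.norm((mirror @ A - A @ mirror) @ psi) for A in ops)
operator_level = max(np.linalg.norm(mirror @ A - A @ mirror) for A in ops[1:])

print(state_level, operator_level)
```

Here `state_level` vanishes up to floating-point error while `operator_level` does not: the commutators annihilate $|\Psi\rangle$ without vanishing as operators. Changing `psi` changes `mirror`, which is all that state dependence means in this toy setting.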

PR’s work created some backreaction, most of which centered around the nature of this “unusual” state dependence, which generated considerable confusion. Aspects of PR’s proposal were critiqued in a number of papers, particularly [6,7], which led many to claim that state dependence violates quantum mechanics. Coincidentally, I had the good fortune of being a visiting grad student at the KITP around this time, where these issues were hotly debated during a long-term workshop on quantum gravity. This was a very stimulating time, when the firewall paradox was still center-stage, and the collective confusion was almost palpable. Granted, I was a terribly confused student, but the fact that the experts couldn’t even agree on language, let alone physics, certainly didn’t do me any favours. Needless to say, the debate was never resolved, and the field’s collective attention span eventually drifted to other things. Yet somehow, the claim that state dependence violates quantum mechanics (or otherwise constitutes an unusual or potentially problematic modification thereof) has since risen to the level of dogma, and one finds it regurgitated again and again in papers published since.

Motivated in part by the desire to understand the precise nature of state dependence in this context (though really, it was the interior spacetime I was after), I wrote a paper [8] last year in an effort to elucidate and connect a number of interesting ideas in the emergent spacetime or “It from Qubit” paradigm. At a technical level, the only really novel bit was the application of modular inclusions, which provide a relatively precise framework for investigating the question of how one represents information in the black hole interior, and perhaps how the bulk spacetime emerges more generally. The relation between Tomita-Takesaki theory itself (a subset of algebraic quantum field theory) and state dependence was already pointed out by PR [3], and is highlighted most succinctly in Kyriakos’ later paper in 2017 [9], which was the main stimulus behind my previous post on the subject. However, whereas PR arrived at this connection from more physical arguments (over the course of hundreds of pages!), I took essentially the opposite approach: my aim was to distill the fundamental physics as cleanly as possible, to which end modular theory proves rather useful for demystifying issues which might otherwise remain obfuscated by details. The focus of my paper was consequently decidedly more conceptual, and represents a personal attempt to gain deeper physical insight into a number of tantalizing connections that have appeared in the literature in recent years (e.g., the relationship between geometry and entanglement represented by Ryu-Takayanagi, or the ontological basis for quantum error correction in holography).

I’ve little to add here that isn’t said better in [8] — and indeed, I’ve already written about various aspects on other occasions — so I invite you to simply read the paper if you’re interested. Personally, I think it’s rather well-written, though card-carrying members of the “shut up and calculate” camp may find it unpalatable. The paper touches on a relatively wide range of interrelated ideas in holography, rather than state dependence alone; but the upshot for the latter is that, far from being pathological, state dependence (precisely defined) is

- a natural part of standard quantum field theory, built into the algebraic framework at a fundamental level, and

- an inevitable feature of any attempt to represent information behind horizons.

I hasten to add that “information” is another one of those words that physicists love to abuse; here, I mean a state sourced by an operator whose domain of support is spacelike separated from the observer (e.g., excitations localized on the opposite side of a Rindler/black hole horizon). The second statement above is actually quite general, and results whenever one attempts to reconstruct an excitation outside its causal domain.

So why am I devoting an entire post to this, if I’ve already addressed it at length elsewhere? There were essentially two motivations for this. One is that I recently had the opportunity to give a talk about this at the YITP in Kyoto (the slides for which are available from the program website), and I fell back down the rabbit hole in the course of reviewing. In particular, I wanted to better understand various statements in the literature to the effect that state dependence violates quantum mechanics. I won’t go into these in detail here (one can find a thorough treatment in PR’s later works), but suffice it to say the primary issue seems to lie more with language than physics: in the vast majority of cases, the authors simply weren’t precise about what they meant by “state dependence” (though in all fairness, PR weren’t totally clear on this either), and the rare exceptions to this had little to nothing to do with the unqualified use of the phrase here. I should add the disclaimer that I’m not necessarily vouching for every aspect of PR’s approach; they did a hell of a lot more than just write down (3), after all. My claim is simply that state dependence, in the fundamental sense I describe, is a feature, not a bug. Said differently, even if one rejects PR’s proposal as a whole, the state dependence that ultimately underlies it will continue to underlie any representation of the black hole interior. Indeed, I had hoped that my paper would help clarify things in this regard.

And this brings me to the second reason, namely: after my work appeared, a couple of other papers [10,11] were written that continued the offense of conflating the unqualified phrase “state dependence” with different and not-entirely-clear things. Of course, there’s no monopoly on terminology: you can redefine terms however you like, as long as you’re clear. But conflating language leads to conflated concepts, and this is where we get into trouble. Case in point: both papers contain a number of statements which I would have liked to see phrased more carefully in light of my earlier work. Indeed, [11] goes so far as to write that “interior operators cannot be encoded in the CFT in a state-dependent way.” On the contrary, as I had explained the previous year, it’s actually the state-independent operators that lead to pathologies (specifically, violations of unitarity)! Clearly, whatever the author means by this, it is not the same state dependence at work here. So consider this a follow-up attempt to stop further terminological confusion.

As I’ll discuss below, both these works — and indeed most other proposals from quantum information — ultimately rely on the Hayden-Preskill protocol [12] (and variations thereof), so the real question is how the latter relates to state dependence in the unqualified use of the term (i.e., as defined via Tomita-Takesaki theory; I refer to this usage as “unqualified” because if you’re talking about firewalls and don’t specify otherwise, then this is the relevant definition, as it underlies PR’s introduction of the phrase). I’ll discuss this in the context of Beni’s work [10] first, since it’s the clearer of the two, and comment more briefly on Geof’s [11] below.

In a nutshell, the classic Hayden-Preskill result [12] is a statement about the ability to decode information given only partial access to the complete quantum state. In particular, one imagines that the proverbial Alice throws a message comprised of $k$ bits of information into a black hole of size $n-k$. The black hole will scramble this information very quickly (the details are not relevant here) such that the information is encoded in some complicated manner among the (new) total $n$ bits of the black hole. For example, if we model the internal dynamics as a simple permutation of Alice’s $k$-bit message, it will be transformed into one of $2^k$ possible $n$-bit strings, a huge number of possibilities!

Now suppose Bob wishes to reconstruct the message by collecting qubits from the subsequent Hawking radiation. Naïvely, one would expect him to need essentially all $n$ bits (i.e., to wait until the black hole evaporates) in order to accurately determine among the $2^k$ possibilities. The surprising result of Hayden-Preskill is that in fact he needs only slightly more than $k$ bits. The time-scale for this depends somewhat on the encoding performed by the black hole, but in principle, this means that Bob can recover the message just after the scrambling time. However, a crucial aspect of this protocol is that Bob knows the initial microstate of the black hole (i.e., the original $(n-k)$-bit string). This is the source of the confusing use of the phrase “state dependence”, as we’ll see below.
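The classical permutation version of this counting is simple enough to simulate. The sketch below is purely illustrative and makes several assumptions of its own: the function name `bits_needed`, a seeded random permutation as the “scrambling dynamics”, and Bob reading the output string from the most significant bit. Bob knows both the permutation and the black hole’s initial microstate, so he simply discards candidate messages that disagree with the bits he has read so far.

```python
import random

def bits_needed(n, k, message, bh_state, seed=0):
    """Return (s, decoded): how many leading output bits Bob reads before a single
    candidate message survives, and which candidate that is."""
    rng = random.Random(seed)
    perm = list(range(2**n))
    rng.shuffle(perm)                  # toy dynamics: a fixed permutation of n-bit strings

    def scramble(msg):
        # Input string = Alice's k message bits followed by the (n - k) black hole bits
        return perm[(msg << (n - k)) | bh_state]

    output = scramble(message)
    candidates = list(range(2**k))     # Bob knows bh_state, so only the message is unknown
    for s in range(n + 1):
        shift = n - s
        candidates = [m for m in candidates if scramble(m) >> shift == output >> shift]
        if len(candidates) == 1:
            return s, candidates[0]
    raise AssertionError("unreachable: a permutation is injective")

s, decoded = bits_needed(n=16, k=4, message=0b1011, bh_state=0b101010101010)
print(s, decoded)
```

Each new bit roughly halves the number of surviving candidates, which is the classical intuition for why slightly more than $k$ bits suffice. If Bob did not know `bh_state`, he would have to consider all $2^n$ inputs rather than $2^k$.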

Of course, as Hayden and Preskill acknowledge, this is a highly unrealistic model, and they didn’t make any claims about being able to reconstruct the black hole interior in this manner. Indeed, the basic physics involved has nothing to do with black holes per se, but is a generic feature of quantum error correcting codes, reminiscent of the question of how to share (or decode) a quantum “secret” [13]. The novel aspect of Beni’s recent work [10] is to try to apply this to resolving the firewall paradox, by explicitly reconstructing the interior of the black hole.

Beni translates the problem of black hole evaporation into the sort of circuit language that characterizes much of the quantum information literature. On the one hand, this is nice in that it enables him to make very precise statements in the context of a simple qubit model; and indeed, at the mathematical level, everything’s fine. The confusion arises when trying to lift this toy model back to the physical problem at hand. In particular, when Beni claims to reconstruct state-independent interior operators, he is, from the perspective espoused above, misusing the terms “state-independent”, “interior”, and “operator”.

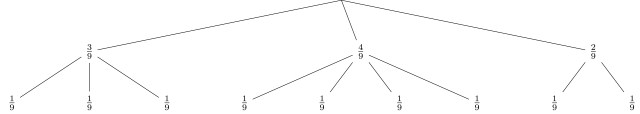

Let’s first summarize the basic picture, and then try to elucidate this unfortunate linguistic hat-trick. The Hayden-Preskill protocol for recovering information from black holes is illustrated in the figure from Beni’s paper above. In this diagram, $B$ is the black hole, which is maximally entangled (in the form of some number of EPR pairs) with the early radiation $R$. Alice’s message corresponds to the state $|\psi\rangle$, which we imagine tossing into the black hole as $A$. One then evolves the black hole (which now includes Alice’s message $A$) by some unitary operator $U$, which scrambles the information as above. Subsequently, $D$ represents some later Hawking modes, with the remaining black hole denoted $C$. Bob’s task is to reconstruct the state $|\psi\rangle$ by acting on $D$ and $R$ (since he only has access to the exterior) with some operator $V$.

Now, Beni’s “state dependence” refers to the fact that the technical aspects of this construction relied on putting the initial state of the black hole and radiation, $|\Psi\rangle$, in the form of a collection of EPR pairs, $|\mathrm{EPR}\rangle$. This can be done by finding some unitary operator $K$, such that

$$(I\otimes K)|\Psi\rangle = |\mathrm{EPR}\rangle. \tag{5}$$

(Here, one imagines a further split into a Hawking mode and its partner just behind the horizon, so that $I$ acts on the interior mode while $K$ affects only the new Hawking mode and the early radiation; see [10] for details.) This is useful because it enables the algorithm to work for arbitrary black holes: for some other initial state $|\Psi'\rangle$, one can find some other $K'$ which results in the same state $|\mathrm{EPR}\rangle$. The catch is that Bob’s reconstruction depends on $K$, and therefore on the initial state $|\Psi\rangle$. But this is to be expected: it’s none other than the Hayden-Preskill requirement above that Bob needs to know the exact microstate of the system in order for the decoding protocol to work. It is in this sense that the Hayden-Preskill protocol is “state-dependent”, which clearly references something different than what we mean here. The reason I go so far as to call this a misuse of terminology is that Beni explicitly conflates the two, and regurgitates the claim that these “state-dependent interior operators” lead to inconsistencies with quantum mechanics, referencing work above. Furthermore, as alluded to above, there’s an additional discontinuity of concepts here, namely that the “state-dependent” operator $V$ is obviously not the “interior operator” to which we’re referring: its support isn’t even restricted to the interior, nor does it source any particular state localized therein!
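This Hayden-Preskill sense of “state dependence” can be made concrete in a few lines. The sketch below is a toy of my own, assuming a pair of $d$-level systems in a maximally entangled state (so that a unitary on one factor suffices); the helper names `find_K` and `apply_on_second` are hypothetical. It shows that the unitary mapping a given initial state to the EPR state is determined by that state, and that the unitary for one state fails on another.

```python
import numpy as np

def random_unitary(dim, rng):
    q, r = np.linalg.qr(rng.normal(size=(dim, dim)) + 1j * rng.normal(size=(dim, dim)))
    return q * (np.diag(r) / np.abs(np.diag(r)))       # fix column phases; still unitary

def epr(dim):
    # Coefficient matrix of sum_i |i>|i> / sqrt(dim)
    return np.eye(dim, dtype=complex) / np.sqrt(dim)

def apply_on_second(K, psi):
    # (I (x) K) acts on the coefficient matrix psi[i, j] as psi -> psi K^T
    return psi @ K.T

def find_K(psi):
    """For a maximally entangled psi, return K with (I (x) K)|psi> = |EPR>."""
    dim = psi.shape[0]
    return np.linalg.inv(psi).T / np.sqrt(dim)

rng = np.random.default_rng(2)
d = 4
psi_1 = apply_on_second(random_unitary(d, rng), epr(d))   # one initial microstate
psi_2 = apply_on_second(random_unitary(d, rng), epr(d))   # a different one

K_1, K_2 = find_K(psi_1), find_K(psi_2)
print(np.allclose(apply_on_second(K_1, psi_1), epr(d)))   # K_1 works for psi_1
print(np.allclose(apply_on_second(K_1, psi_2), epr(d)))   # but not for psi_2
```

Any decoder built on top of $K$ inherits this dependence on the initial state, which is a statement about Bob’s knowledge of the microstate rather than about operators defined only through their action on a state.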

Needless to say, I was in a superposition of confused and unhappy with the terminology in this paper, until I managed to corner Beni at YITP for a couple hours at the aforementioned workshop, where he was gracious enough to clarify various aspects of his construction. It turns out that he actually has in mind something different when he refers to the interior operator. Ultimately, the identification still fails on these same counts, but it’s worth following the idea a bit further in order to see how he avoids the “state dependence” in the vanilla Hayden-Preskill set-up above. (By now I shouldn’t have to emphasize that this form of “state dependence” isn’t problematic in any fundamental sense, and I will continue to distinguish it from the latter, unqualified use of the phrase with quotation marks).

One can see from the above diagram that the state of the black hole — before Alice & Bob start fiddling with it — can be represented by the following diagram, also from [10]:

where $R$, $D$, and $C$ are again the early radiation, later Hawking mode, and remaining black hole, respectively. The problem Beni solves is finding the “partner” (by which he means the purification) of $D$ in $CR$. Explicitly, he wants to find the operator $\tilde{O}_{CR}$ such that

$$(O_D\otimes I_{CR})|\Psi\rangle = (I_D\otimes\tilde{O}_{CR})|\Psi\rangle. \tag{6}$$

Note that there’s yet another language ergo conceptual discontinuity here, namely that Beni uses “qubit”, “mode”, and “operator” interchangeably (indeed, when I pressed him on this very point, he confirmed that he regards these as synonymous). These are very different beasts in the physical problem at hand; however, for the purposes of Beni’s model, the important fact is that one can push the operator $O_D$ (which one should think of as some operator that acts on $D$) through the unitary $U$ to some other operator that acts on both the black hole and the early radiation.

He then goes on to show that one can reconstruct this operator independently of the initial state of the black hole (i.e., the operator $K$) by coupling to an auxiliary system. Of course, I’m glossing over a great number of details here; in particular, Beni transmutes the outgoing mode $D$ into a representation of the interior mode in his model, and calls whatever purifies it the “partner”. Still, I personally find this a bit underwhelming; but then, from my perspective, the Hayden-Preskill “state dependence” wasn’t the issue to begin with; quantum information people may differ, and in any case Beni’s construction is still a neat toy model in its own domain.

However, the various conflations above are problematic when one attempts to map back to the fundamental physics we’re after: $\tilde{O}_{CR}$ is not the “partner” of the mode $D$ in the relevant sense (namely, the pairwise entangled modes required for smoothness across the horizon), nor does it correspond to PR’s mirror operator (since its support actually straddles both sides of the horizon). Hence, while Beni’s construction does represent a non-trivial refinement of the original Hayden-Preskill protocol, I don’t think it solves the problem.

So if this model misses the point, what does Hayden-Preskill actually achieve in this context? Indeed, even in the original paper [12], they clearly showed that one can recover a message from inside the black hole. Doesn’t this mean we can reconstruct the interior in a state-independent manner, in the proper use of the term?

Well, not really. Essentially, Hayden-Preskill (in which I’m including Beni’s model as the current state-of-the-art) & PR (and I) are asking different questions: the former are asking whether it’s possible to decode messages to which one would not normally have access (answer: yes, if you know enough about the initial state and any auxiliary systems), while the latter are asking whether physics in the interior of the black hole can be represented in the exterior (answer: yes, if you use state-dependent operators). Reconstructing information about entangled qubits is not quite the same thing as reconstructing the state in the interior. Consider a single Bell pair for simplicity, consisting of an exterior qubit (say, $D$ in Beni’s model) and the interior “partner” that purifies it. Obviously, this state isn’t localized to either side, and so does not correspond to an interior operator.

The distinction is perhaps a bit subtle, so let me try to clarify. Let us define the operator $\tilde{\mathcal{O}}_A$ with support behind the horizon, whose action on the vacuum $|\Omega\rangle$ creates the state in which Alice’s message has been thrown into the black hole; i.e., let

$$|\psi_A\rangle = (\tilde{\mathcal{O}}_A\otimes I_R)|\Omega\rangle \tag{7}$$

denote the state of the black hole containing Alice’s message, where the identity factor acts on the early radiation. Now, the fundamental result of PR is that if Bob wishes to reconstruct the interior of the black hole (concretely, the excitation behind the horizon corresponding to Alice’s message), he can only do so using state-dependent operators. In other words, there is no operator with support localized to the exterior which precisely equals $\tilde{\mathcal{O}}_A$; but Bob can find a state that approximates $|\psi_A\rangle$ arbitrarily well. This is more than just an operational restriction; rather, it stems from an interesting trade-off between locality and unitarity which seems built into the theory at a fundamental level; see [8] for details.

Alternatively, Bob might not care about directly reconstructing the black hole interior (since he’s not planning on following Alice in, he’s not concerned about verifying smoothness as we are). Instead he’s content to wait for the “information” in this state to be emitted in the Hawking radiation. In this scenario, Bob isn’t trying to reconstruct the black hole interior corresponding to (7)—indeed, by now this state has long-since been scrambled. Rather, he’s only concerned with recovering the information content of Alice’s message—a subtly related but crucially distinct procedure from trying to reconstruct the corresponding state in the interior. And the fundamental result of Hayden-Preskill is that, given some admittedly idealistic assumptions (i.e., to the extent that the evaporating black hole can be viewed as a simple qubit model) this can also be done.

In the case of Geof’s paper [11], there’s a similar but more subtle language difference at play. Here the author uses “state dependence” to mean something different from both Beni and PR/myself; specifically, he means “state dependence” in the context of quantum error correction (QEC). This is more clearly explained in his earlier paper with Hayden [14], and refers to the fact that in general, a given boundary operator may only reconstruct a given bulk operator for a single black hole microstate. Conversely, a “state-independent” boundary operator, in their language, is one which approximately reconstructs a given bulk operator in a larger class of states; specifically, all states in the code subspace. Note that the qualifier “approximate” is crucial here. Otherwise, schematically, if $|\psi\rangle$ represents some small perturbation of the vacuum $|\Omega\rangle$ (where “small” means that the backreaction is insufficient to move us beyond the code subspace), then an exact reconstruction of the operator $\mathcal{O}$ that sources the state $\mathcal{O}|\Omega\rangle$ would instead produce some other state $\mathcal{O}|\psi\rangle$. So at the end of the day, I simply find the phrasing in [11] misleading; the lack of qualifiers makes many of his statements about “state-(in)dependence” technically erroneous, even though they’re perfectly correct in the context of approximate QEC.

At the end of the day however, these [10,11,14] are ultimately quantum information-theoretic models, in which the causal structure of the original problem plays no role. This is obvious in Beni’s case [10], in which Hayden-Preskill boils down to the statement that if one knows the exact quantum state of the system (or approximately so, given auxiliary qubits), then one can recover information encoded non-locally (e.g., Alice’s bit string) from substantially fewer qubits than one would naïvely expect. It’s more subtle in [11,14], since the authors work explicitly in the context of entanglement wedge reconstruction in AdS/CFT, which superficially would seem to include aspects of the spacetime structure. However, they take the black hole to be included in the entanglement wedge (i.e., code subspace) in question, and ask only whether an operator in the corresponding boundary region “works” for every state in this (enlarged) subspace, regardless of whether the bulk operator we’re trying to reconstruct is behind the horizon (i.e., ignoring the localization of states in this subspace). And this is where super-loading the terminology “state-(in)dependence” creates the most confusion. For example, when Geof writes that “boundary reconstructions are state independent if, and only if, the bulk operator is contained in the entanglement wedge” (emphasis added), he is making a general statement that holds only at the level of QEC codes. If the bulk operator lies behind the horizon however, then simply placing the black hole within the entanglement wedge does not alter the fact that a state-independent reconstruction, in the unqualified use of the phrase, does not exist.

Of course, as the authors of [14] point out in this work, there is a close relationship between state dependence in QEC and in PR’s use of the term. Indeed, one of the closing thoughts of my paper [8] was the idea that modular theory may provide an ontological basis for the epistemic utility of QEC in AdS/CFT. Hence I share the authors’ view that it would be very interesting to make the relation between QEC and (various forms of) state dependence more precise.

I should add that in Geof’s work [11], he seems to skirt some of the interior/exterior objections above by identifying (part of) the black hole interior with the entanglement wedge of some auxiliary Hilbert space that acts as a reservoir for the Hawking radiation. Here I can only confess some skepticism as to various aspects of his construction (or rather, the legitimacy of his interpretation). In particular, the reservoir is artificially taken to lie outside the CFT, which would normally contain a complete representation of exterior states, including the radiation. Consequently, the question of whether it has a sensible bulk dual at all is not entirely clear, much less a geometric interpretation as the “entanglement wedge” behind the horizon, whose boundary is the origin rather than asymptotic infinity.

A related paper [15] by Almheiri, Engelhardt, Marolf, and Maxfield appeared on the arXiv simultaneously with Geof’s work. While these authors are not concerned with state-dependence per se, they do provide a more concrete account of the effects on the entanglement wedge in the context of a precise model for an evaporating black hole in AdS/CFT. The analogous confusion I have in this case is precisely how the Hawking radiation gets transferred to the left CFT, though this may eventually come down to language as well. In any case, this paper is more clearly written, and worth a read (happily, Henry Maxfield will speak about it during one of our group’s virtual seminars in August, so perhaps I’ll obtain greater enlightenment about both works then).

Having said all that, I believe all these works are helpful in strengthening our understanding, and exemplify the productive confluence of quantum information theory, holography, and black holes. A greater exchange of ideas from various perspectives can only lead to further progress, and I would like to see more work in all these directions.

I would like to thank Beni Yoshida, Geof Penington, and Henry Maxfield for patiently fielding my persistent questions about their work, and beg their pardon for the gross simplifications herein. I also thank the YITP in Kyoto for their hospitality during the Quantum Information and String Theory 2019 / It from Qubit workshop, where most of this post was written amidst a great deal of stimulating discussion.

References

1. K. Papadodimas and S. Raju, “Remarks on the necessity and implications of state-dependence in the black hole interior,” arXiv:1503.08825
2. K. Papadodimas and S. Raju, “Local Operators in the Eternal Black Hole,” arXiv:1502.06692
3. K. Papadodimas and S. Raju, “State-Dependent Bulk-Boundary Maps and Black Hole Complementarity,” arXiv:1310.6335
4. K. Papadodimas and S. Raju, “Black Hole Interior in the Holographic Correspondence and the Information Paradox,” arXiv:1310.6334
5. K. Papadodimas and S. Raju, “An Infalling Observer in AdS/CFT,” arXiv:1211.6767
6. D. Harlow, “Aspects of the Papadodimas-Raju Proposal for the Black Hole Interior,” arXiv:1405.1995
7. D. Marolf and J. Polchinski, “Violations of the Born rule in cool state-dependent horizons,” arXiv:1506.01337
8. R. Jefferson, “Comments on black hole interiors and modular inclusions,” arXiv:1811.08900
9. K. Papadodimas, “A class of non-equilibrium states and the black hole interior,” arXiv:1708.06328
10. B. Yoshida, “Firewalls vs. Scrambling,” arXiv:1902.09763
11. G. Penington, “Entanglement Wedge Reconstruction and the Information Paradox,” arXiv:1905.08255
12. P. Hayden and J. Preskill, “Black holes as mirrors: Quantum information in random subsystems,” arXiv:0708.4025
13. R. Cleve, D. Gottesman, and H.-K. Lo, “How to share a quantum secret,” arXiv:quant-ph/9901025
14. P. Hayden and G. Penington, “Learning the Alpha-bits of Black Holes,” arXiv:1807.06041
15. A. Almheiri, N. Engelhardt, D. Marolf, and H. Maxfield, “The entropy of bulk quantum fields and the entanglement wedge of an evaporating black hole,” arXiv:1905.08762
