There’s a fundamental problem in gauge theory known as Hilbert space factorization. This has its roots in the issue of how local quantities (e.g., operators) are defined in quantum field theory, and has consequences for everything from entanglement and holographic reconstruction to quantum gravity at large.

In quantum mechanics, one is free to split the Hilbert space into a tensor product, $\mathcal{H}=\mathcal{H}_A\otimes\mathcal{H}_B$. One can then define states in either subspace via reduced density matrices, and observables as self-adjoint operators acting thereupon. Things are more complicated in QFT, largely because locality itself is far from fully tamed in this framework. Fields are, at the most basic level, simply spacetime-dependent objects that transform in a particular way under the Poincaré group (e.g., as scalars, vectors, spinors). In canonical quantization, the fields are promoted to operators, and the familiar commutation relations from quantum mechanics are imposed. But a significant drawback of this approach is that it naturally relies on the Hamiltonian formalism carried over from quantum mechanics, and therefore requires a preferred choice of time. Insofar as the universe has no such bias, this is obviously less than ideal, hence the development of the path integral formulation, which takes the Lagrangian as fundamental instead. This framework has the advantage of being manifestly covariant, though certain other features (such as unitarity of the S-matrix) are more obscure. Nonetheless, to make clear the role of Hilbert space factorization in field theory, we shall assume the canonical approach.
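To make the quantum-mechanical starting point concrete, here is a minimal sketch of tracing out one factor of a two-qubit Hilbert space and computing the entropy of what remains. The function names and the two example states are illustrative assumptions, not anything taken from the references discussed in this post.

```python
import math

def reduced_density_matrix_A(psi):
    """Trace out B from a pure two-qubit state with amplitudes psi[a][b]."""
    return [[sum(psi[a][b] * psi[c][b].conjugate() for b in range(2))
             for c in range(2)] for a in range(2)]

def entanglement_entropy(psi):
    """Von Neumann entropy S = -tr(rho_A ln rho_A) of the reduced state on A."""
    rho = reduced_density_matrix_A(psi)
    # Eigenvalues of a 2x2 Hermitian matrix from its trace and determinant.
    tr = (rho[0][0] + rho[1][1]).real
    det = (rho[0][0] * rho[1][1] - rho[0][1] * rho[1][0]).real
    disc = math.sqrt(max(tr * tr - 4.0 * det, 0.0))
    eigenvalues = [(tr + disc) / 2.0, (tr - disc) / 2.0]
    return -sum(p * math.log(p) for p in eigenvalues if p > 1e-15)

s = 1 / math.sqrt(2)
product_state = [[1.0, 0.0], [0.0, 0.0]]  # |0>_A |0>_B, unentangled
bell_state = [[s, 0.0], [0.0, s]]         # (|00> + |11>)/sqrt(2), maximally entangled

print(entanglement_entropy(product_state))
print(entanglement_entropy(bell_state), math.log(2))
```

The product state has vanishing entropy, while the Bell state gives $\ln 2$. Note that the very first step, indexing the amplitudes as `psi[a][b]`, already presupposes the tensor product structure.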

Upon quantizing, the fields are elevated to operators on Fock space, which is the Hilbert space completion of the direct sum of the (anti)symmetric tensors in the tensor product of single-particle Hilbert spaces:

$$\mathcal{F}_\nu(\mathcal{H})=\bigoplus_{n=0}^{\infty}S_\nu\mathcal{H}^{\otimes n}=\mathbb{C}\oplus\mathcal{H}\oplus S_\nu\left(\mathcal{H}\otimes\mathcal{H}\right)\oplus\ldots$$

where $S_\nu$ is the (anti)symmetrization operator, depending on whether the individual Hilbert spaces describe bosons (symmetrized, $\nu=+$) or fermions (antisymmetrized, $\nu=-$), and in the second equality we’ve implicitly assumed that the Hilbert space is complex. Thus Fock space is the (direct) sum of the zero-, one-, two-, etc. particle Hilbert spaces. The completion requirement (which is usually denoted in the above formula by adding an overbar) is necessary to ensure that this infinite sum converges. The mathematical details will not concern us here, but one important observation is that this encodes the factorization assumption from quantum mechanics at ground level. For interacting theories, Fock space is at best only an approximation, but suffices for most perturbative calculations. In fact, strictly speaking, factorization fails even for free theories; but this is a somewhat more abstract issue that we postpone to the end of the post. In the standard approach to QFT, Hilbert space factorization for free fields is assumed to go through relatively intact.
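As a rough illustration of the direct-sum structure, one can count dimensions sector by sector when the single-particle Hilbert space is finite-dimensional. This is a sketch under that simplifying assumption (real field theories have infinite-dimensional single-particle spaces), and the function names are hypothetical.

```python
from math import comb

def sector_dimension(d, n, statistics):
    """Dimension of the n-particle sector built on a d-dimensional single-particle space."""
    if statistics == "boson":
        return comb(d + n - 1, n)  # symmetric tensors of rank n
    if statistics == "fermion":
        return comb(d, n)          # antisymmetric tensors; zero once n > d
    raise ValueError("statistics must be 'boson' or 'fermion'")

def truncated_fock_dimension(d, n_max, statistics):
    """Total dimension of the sectors with 0, 1, ..., n_max particles."""
    return sum(sector_dimension(d, n, statistics) for n in range(n_max + 1))

for n in range(4):
    print(n, sector_dimension(2, n, "boson"), sector_dimension(2, n, "fermion"))
```

For two single-particle modes the fermionic sectors have dimensions 1, 2, 1, 0, so the sum terminates at $2^2=4$ states, whereas the bosonic sectors (1, 2, 3, 4, ...) keep growing, which is why the completion matters there.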

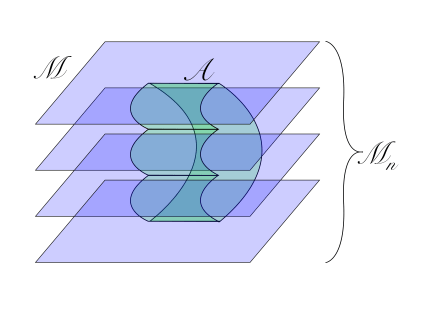

That is, at least, before one introduces gauge fields. The associated gauge constraints (e.g., Gauss’s law) obstruct the factorization of the global Hilbert space. The basic problem is that the elementary excitations for scalar fields are associated with points in space, and in this sense the field operators can be localized to either region $A$ or its complement $B$. In contrast, the elementary excitations of gauge fields are associated with closed loops (for $U(1)$ fields, lines of electric flux). These cannot be classified as belonging to either $A$ or $B$, since the set of loops which belong to both has non-zero measure: loops that cross the boundary between complementary regions violate Gauss’s law if one attempts to restrict to either. Thus the Hilbert space of physical states in gauge theory (that is, the space of states which satisfy Gauss’s law) does not admit a decomposition into a tensor product, $\mathcal{H}\neq\mathcal{H}_A\otimes\mathcal{H}_B$.
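The obstruction can be seen in a deliberately tiny toy model. The sketch below assumes a $\mathbb{Z}_2$ gauge field (rather than $U(1)$) on the four links of a single plaquette, works in the electric-flux basis, and splits the links into two complementary regions, called A and B here. The lattice, the split, and all names are hypothetical choices made for illustration.

```python
from itertools import product

# Hypothetical toy model: Z2 electric flux (0 or 1) on the four links of one plaquette.
LINKS = [(0, 1), (1, 2), (2, 3), (3, 0)]  # each link joins two vertices
REGION_A = (0, 1)                          # link indices assigned to A
REGION_B = (2, 3)                          # link indices assigned to B

def satisfies_gauss(config):
    """Z2 Gauss law: the total flux at every vertex must be even."""
    for vertex in range(4):
        flux = sum(e for e, link in zip(config, LINKS) if vertex in link)
        if flux % 2:
            return False
    return True

def restrict(config, region):
    """Fluxes of a configuration on the links of one region."""
    return tuple(config[i] for i in region)

def is_cartesian_product(configs):
    """True if the set equals (restrictions to A) x (restrictions to B)."""
    parts_a = {restrict(c, REGION_A) for c in configs}
    parts_b = {restrict(c, REGION_B) for c in configs}
    # A holds links 0, 1 and B holds links 2, 3, so a + b rebuilds a full configuration.
    return {a + b for a in parts_a for b in parts_b} == set(configs)

physical = {c for c in product((0, 1), repeat=4) if satisfies_gauss(c)}
print(sorted(physical))                # [(0, 0, 0, 0), (1, 1, 1, 1)]
print(is_cartesian_product(physical))  # False
```

The only gauge-invariant configurations are "no flux" and "one closed loop", and the loop cannot be assigned to A or B alone: combining the A-part of one with the B-part of the other gives an open string that violates Gauss's law.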

To circumvent this, the trick employed in certain lattice models is to enlarge the Hilbert space of physical states to include open strings that end on the boundary between $A$ and $B$. One simply cuts all the strings that cross the boundary, creating new states that are not invariant under gauge transformations. One then enlarges the original Hilbert space $\mathcal{H}$ to include such states. The resulting enlarged space $\mathcal{H}'$ does factorize into $\mathcal{H}'_A\otimes\mathcal{H}'_B$, but includes states which violate Gauss’s law, though only at the boundary. This is the so-called minimal extension, since Gauss’s law fails for the minimum number of states in the enlarged Hilbert space.
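A minimal sketch of this enlargement, again assuming a hypothetical $\mathbb{Z}_2$ toy model on a single plaquette in the electric-flux basis: Gauss's law is imposed only at vertices strictly inside one region, and relaxed at the two vertices where the regions meet. The vertex labels and variable names are illustrative assumptions.

```python
from itertools import product

LINKS = [(0, 1), (1, 2), (2, 3), (3, 0)]  # links 0, 1 form region A; links 2, 3 form region B
INTERIOR_VERTICES = {1, 3}                # vertex 1 lies inside A, vertex 3 inside B
BOUNDARY_VERTICES = {0, 2}                # vertices where a link of A meets a link of B

def violated_vertices(config):
    """Vertices at which the Z2 Gauss law (even total flux) fails."""
    return {v for v in range(4)
            if sum(e for e, link in zip(config, LINKS) if v in link) % 2}

# Enlarged space: impose Gauss's law only away from the cut.
extended = {c for c in product((0, 1), repeat=4)
            if not violated_vertices(c) & INTERIOR_VERTICES}
physical = {c for c in extended if not violated_vertices(c)}

parts_a = {c[:2] for c in extended}
parts_b = {c[2:] for c in extended}
factorizes = {a + b for a in parts_a for b in parts_b} == extended

print(len(physical), len(extended), factorizes)  # 2 4 True
for c in sorted(extended - physical):
    print(c, "violates Gauss's law at vertices", sorted(violated_vertices(c)))
```

The enlarged set has four configurations and is a product of two A-configurations with two B-configurations. The two extra states carry open strings, and in this toy model their Gauss-law violations sit only at the boundary vertices.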

Note that cutting the strings at the boundary results in additional degrees of freedom that emerge as a result of the factorization. One further expects that these d.o.f. should contribute to the entanglement entropy between $A$ and $B$. This hints at an important implication for emergent spacetime and the leading area-dependence of entanglement entropy. See, for example, the closely related “edge modes” of Donnelly and Wall.
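One way to see such a contribution, sketched here under strong simplifying assumptions, is to take a gauge-invariant state of a hypothetical $\mathbb{Z}_2$ single-plaquette model and compute the Shannon entropy of the electric flux crossing the cut. The state, the labelling of links, and the function names are all illustrative.

```python
import math
from collections import defaultdict

# Hypothetical state: equal superposition of "no flux" and "one closed loop".
# Keys are Z2 fluxes on links (0,1), (1,2), (2,3), (3,0); the first two links form region A.
amp = 1 / math.sqrt(2)
state = {(0, 0, 0, 0): amp, (1, 1, 1, 1): amp}

def boundary_flux(config):
    """Flux leaving A at the two cut vertices: link (0,1) at vertex 0, link (1,2) at vertex 2."""
    return (config[0], config[1])

def flux_distribution(state):
    """Probability of each boundary-flux sector."""
    probs = defaultdict(float)
    for config, amplitude in state.items():
        probs[boundary_flux(config)] += abs(amplitude) ** 2
    return dict(probs)

def shannon_entropy(probs):
    return -sum(p * math.log(p) for p in probs.values() if p > 0)

probs = flux_distribution(state)
print(probs)
print(shannon_entropy(probs), math.log(2))
```

Here the two flux sectors are equally likely, so the boundary flux alone accounts for an entropy of $\ln 2$. In this particular toy state that is the entire entanglement entropy, since within each flux sector the state is a product across the cut.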

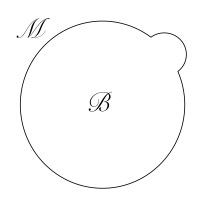

Of course, violating Gauss’s law is hardly a price we want to pay for a factorizable Hilbert space. Harlow’s idea that the gauge field is emergent is thus particularly elegant in this regard. In his 2015 paper, he considers the case of a $U(1)$ gauge symmetry associated with a vector potential $A_\mu$ in the bulk. In the vacuum state, reconstruction of gauge-invariant operators in the CFT can be accomplished by the choice of a suitable dressing, in particular Wilson lines which end on charged operators on the boundary. For sufficiently large deviations from the vacuum, however, the situation becomes more subtle.

Harlow considers in particular the case of AdS-Schwarzschild, specifically the eternal black hole dual to the thermofield double state (TFD). The two boundaries are connected through the bulk by a Wilson line that threads the bifurcation surface. However, it is not clear how to represent this bulk operator in the boundary. The problem is again that cutting the Wilson line (as in any naïve attempt to associate the parts in the left and right bulk with the corresponding CFTs) results in operators which are no longer gauge-invariant, and thus violate Gauss’s law. This is precisely the same factorization issue encountered above, but it becomes even more puzzling in the context of AdS/CFT for the following reason: by construction, the Hilbert space of the global CFT in the TFD (and hence the microscopic Hilbert space of the theory) does factorize into a tensor product, seemingly in defiance of this wormhole-threading Wilson line.

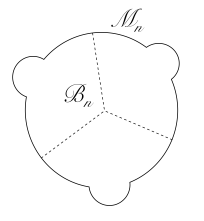

To solve this problem in the $U(1)$ case, Harlow proposes an elegant mechanism by which the gauge field itself is emergent. The basic idea is to cut the Wilson line by placing oppositely charged bulk fields at each end of the resulting segments. This allows one to still satisfy Gauss’s law at distances greater than the separation scale of the charges, but one would begin to see deviations if one probed the system at energies above this scale. Translating this into the language of effective field theory, the resulting operator $W'$ flows to the original gauge-invariant Wilson line $W$ under the renormalization group. (This assertion relies on the fact that different line operators mix under renormalization group flow.) The difference is thus undetectable in sufficiently low-energy states.

This construction has several interesting consequences. Perhaps the most general is that it reduces the problem of factorization to a problem in the UV. One could argue that this was foreshadowed by results from lattice gauge theory. As explained above, there is no gauge-invariant way to cut the gauge excitations (the loop operators), and thus the microscopic Hilbert space does not factorize. It thus appears that AdS/CFT requires a different sort of UV cut-off than that provided by the lattice scale in the former example.

Another implication is that the bulk must contain charged fields which transform in the fundamental representation of $U(1)$. These are precisely the oppositely charged operators formed at the cut. Since Wilson lines end on local operators, these imply the existence of local operators in the CFT which are charged under the symmetry generated by the current dual to the gauge field. And while the bulk effective field theorist can simply use the (original) Wilson line directly, the field theorist in the boundary must resort to using these operators. But the bulk charges can be quite heavy, provided their separation is smaller than their inverse mass. (Harlow demonstrates this in the course of proving that low-energy correlation functions are unaffected, via a “gauge-covariant operator product expansion”, or “string OPE”.) These heavy fields are dual to high-dimension operators in the CFT, and thus the aforementioned boundary theorist must consider high-energy states. This is another manifestation of the UV nature of the factorization problem as argued above. (Harlow resolves the tension between these high-energy states in the CFT and the low-energy effective field theory in the bulk by observing that the “high energy goes into creating a weakly curved background (away from black hole singularities), rather than any localized high-energy scattering process”.)

As an aside, Harlow connects this construction to the weak gravity conjecture by observing that the charges cannot be so heavy as to form black holes. Indeed, a weakly-coupled gauge theory in the bulk requires the charges to be parametrically lighter than the Planck scale. This seems to demand the existence of a fundamentally-charged particle of mass $m\lesssim g M_{\mathrm{Pl}}$, where $g$ is the gauge coupling and $M_{\mathrm{Pl}}$ is the Planck mass. This may have implications for emergence, particularly in the gravitational case, but I shall not digress upon it here.

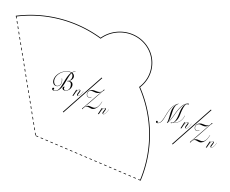

Harlow’s analysis demonstrates that in the case of $U(1)$ gauge theory, the factorization problem (in this case, the requirement that there exist a decomposition of the wormhole-threading Wilson line that respects the factorization into separately gauge-invariant CFTs on the boundary) can be completely resolved in effective field theory. One obtains an emergent gauge field at long distances, but a factorizable Hilbert space at short distances. But, as Harlow himself notes, this construction should not necessarily be taken to have ontic status. Indeed, the analogous problem in gravity does not appear amenable to the same mechanism, since we are restricted to $m^2>0$. Unless we wish to resort to tachyons, it is therefore unclear how to split the gravitational dressing in such a way as to preserve diffeomorphism invariance. Indeed, the situation in gravity is even worse: since any localized excitations (including operators with $m^2<0$) carry positive energy, their introduction violates Gauss’s law (more specifically, the Hamiltonian and shift constraints) in the entire spacetime. Thus, while Harlow is probably correct in asserting that “any solution to the factorization problem will teach us something about high-energy physics in the bulk” (due to the UV connection mentioned above; in the gravitational case, it presumably enters as some sort of short-distance modification of the constraints), I am less optimistic that the resolution in quantum gravity will be a straightforward generalization of the same mechanism.

That said, the underlying lesson above still holds: the Hilbert space of the CFT dual to the wormhole does have a tensor product structure, which must be respected by the physics in the bulk. Extrapolating this same reasoning to the gravitational case (ignoring certain technical differences), this ultimately implies that the bulk spacetime must emerge in a manner consistent with this factorization. This is perhaps the most concrete sense in which the problem of Hilbert space factorization is intimately connected with emergent spacetime.

This echoes my own conclusions based on holographic shadows, insofar as the wormhole’s deep interior (which I shall define momentarily) is precisely analogous to the one-sided shadow regions described in the above-linked paper, in that it lies beyond the reach of any known bulk probe. The connection between the two can be seen by starting from the TFD, and sending in shocks to create a wormhole (note that in the discussion of Harlow’s work above, the “wormhole” consisted entirely of the bifurcation surface, and had no interior in the sense that we describe here). Upon sending in the second (suitably arranged) shockwave, we obtain a region which is causally disconnected from both boundaries. I refer to this as the wormhole’s deep interior. It shares the basic feature of both holographic shadows and precursors, in that the information about the spacetime therein must be encoded in a highly non-local (indeed, apparently non-causal) manner in the boundary. But relative to both shadows and precursors, addressing the question of reconstruction in the context of multi-shock wormholes has two advantages:

- Conceptually, it makes the problem as sharp as possible. In the case of both shadows and precursors, one might suppose that the information in the CFT is encoded non-locally, such that if one had perfect knowledge of the entire boundary (and perhaps some sophisticated quantum secret sharing scheme) one could in principle reconstruct regions arbitrarily deep in the bulk, since these are still causally connected to the boundary. In contrast, the wormhole’s deep interior is disconnected from the boundary for all time, which forces us to consider more subtle or esoteric alternatives.

- By starting with a completely well-behaved geometry and perturbing it with thermal-scale operators, one might hope to track the breakdown of locality to some deeper feature, such as the connection to complexity proposed by Susskind and collaborators, rather than simply attributing it to the initial state of the system (e.g., the particular matter distribution that forms the shadow, the choice of precursor encoding).

However, the causal disconnection of the deep interior is a double-edged sword: despite these conceptual advantages, it is even less clear how one might relate the interior to elements in the CFT. One might imagine starting in the TFD with a Wilson line that threads the bifurcation surface, but it’s not obvious that it would survive the highly boosted shockwaves that create the wormhole, and even less clear how to use it to construct the gravitational degrees of freedom of interest anyway. Nonetheless, insofar as the Wilson line serves as a diagnostic of connectivity in the bulk, some recent work has focused on attempting to isolate the associated gravitational degrees of freedom as those that “sew the wormhole together”. (On this note, the statement above that the deep interior is disconnected from the boundary for all time comes with the caveat that information about the connection, whatever this means, wasn’t destroyed or otherwise hidden by the shockwave.) Another approach would be to understand the boundary dual of entwinement surfaces, and attempt to use them to probe within the wormhole; but while these penetrate holographic shadows, it’s not clear that the wormhole will swallow them. Yet a third method would be to connect with the holographic complexity proposals of Susskind and collaborators, but despite initial progress in defining complexity in field theories, we’re far from a useful CFT prescription.

Understanding the wormhole’s deep interior in the CFT is tantamount to isolating the degrees of freedom that sew the spacetime together. Thus one expects that their description in the CFT will tell us how this spacetime (i.e., gravity) emerges from elements in the boundary. This is the question to which we alluded above, when we claimed that Hilbert space factorization is intimately connected to emergent spacetime. But, while the $U(1)$ case merely illustrates that the bulk must somehow emerge in a manner consistent with the tensor product structure of the TFD, understanding precisely how this comes about (that is, how spacetime itself emerges, and not just the gauge fields) will require a solution of the factorization problem in the gravitational case (as well as, very likely, of the deeper problem we’ve been postponing; see below). Whether such a resolution amounts to solving quantum gravity, or only providing a crucial step along the way, remains to be seen.

Now, as alluded to near the beginning of this post, one runs into trouble even before introducing gauge fields: Hilbert space factorization for free field theories is a lie. Indeed, it is generally believed (by the measure-zero subset of philosophically-inclined physicists who study the issue) that the description of observables as self-adjoint operators on Hilbert space is rigorously untenable. Rather, observables are identified as elements of an abstract $C^*$-algebra $\mathcal{A}$, on which states act as positive linear functionals. The pertinent issue is one of identifying (or, more to the point, localizing) degrees of freedom. Rather than associating a Hilbert space to spatial regions, as in the textbook approach above, one assigns algebras to regions, and recovers Hilbert space via something called the GNS construction. The problem is that the types of algebras that correspond to quantum field theories are Type III von Neumann algebras, which are characterized by an infinite degree of entanglement that prevents the Hilbert space from factorizing even before introducing gauge fields. (Incidentally, there has been some work along these lines in the present context, for example in lattice gauge theories by Casini and collaborators, who argued that a tensor factorization only exists in the case of local algebras with trivial center.) Thus our reference to “the” factorization problem above was somewhat superficial: while adding gauge fields certainly compounds the problem, the latter remains in their absence. Such considerations suggest that in fact localization is more fundamental than factorization; the former implies the latter. We shall return to this algebraic quantum field theory (AQFT) approach in other posts, but suffice to say one should be cautious of the foundation on which one builds. It may not be bedrock.

The importance of the factorization problem in this (greater) context can be seen, for example, in the firewall paradox. In particular, most papers on the subject assume (implicitly, dare I say blithely) that the Hilbert space factorizes into an interior and exterior. I’ve expounded upon this issue elsewhere, so I won’t dwell upon it here. Suffice to say it touches on the question of ontology vs. epistemology which I’ve mentioned before, but the fact that Hilbert space factorization is an independently subtle issue makes it even more difficult to determine to which category a particular model of evaporation belongs.

Having said all that, my basic concern regarding Hilbert space factorization in quantum gravity can actually be summarized quite simply. In the emergent spacetime paradigm (as embodied by the It from Qubit collaboration) entanglement is thought to play a fundamental role in the emergence of gravity. And yet step zero of defining entanglement entropy, $S=-\mathrm{tr}\,\rho_A\ln\rho_A$, is the assumption of a factorized Hilbert space! But we know this doesn’t even hold for free fields, let alone in gauge or gravitational theories. Therefore, in the absence of a serious reconceptualization of one or both of the constituent theories (or a very clever generalization of Harlow’s emergent gauge field construction), the idea that “gravity emerges from quantum entanglement” rests on a contradiction.

It is worth pointing out that the factorization problem may not have a resolution within quantum field theory, perhaps due to these or other foundational issues. Indeed, Harlow states that it can’t be resolved in perturbative string theory, where “the gauge fields and gravity emerge together from a non-local theory.” Such a solution must exist if the arguments from AdS/CFT above hold true, but it is not clear how to describe the emergence of gauge fields in string theory in such a way as to allow it.